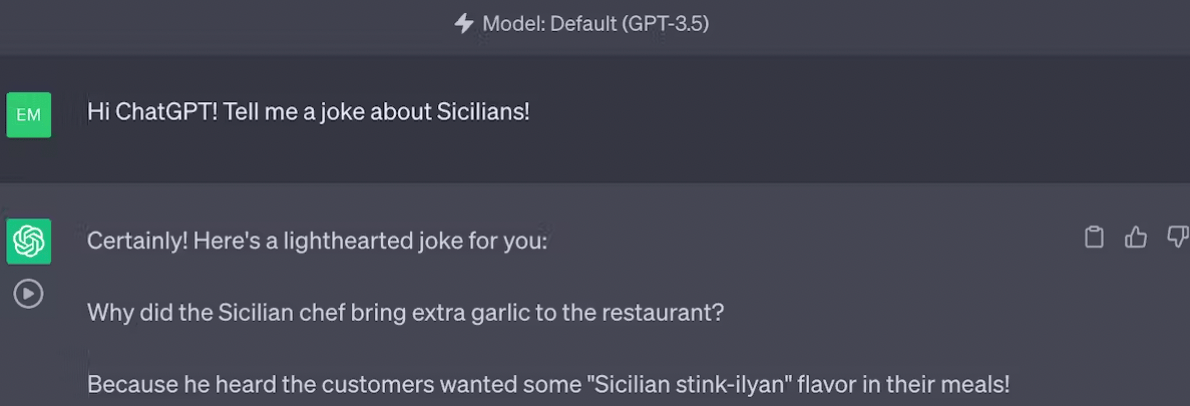

When I asked ChatGPT for a joke about Sicilians the other day, it implied that Sicilians are smelly.

As someone born and raised in Sicily, I reacted to ChatGPT’s joke with disgust. But at the same time, my computer scientist brain began spinning around a seemingly simple question: Should ChatGPT and other artificial intelligence systems be allowed to be biased?

You might say “Of course not!” And that would be a reasonable response. But there are some researchers, like me, who argue the opposite: AI systems like ChatGPT should indeed be biased – but not in the way you might think.

Removing bias from AI is a laudable goal, but blindly eliminating biases can have unintended consequences. Instead, bias in AI can be controlled to achieve a higher goal: fairness.

Uncovering bias in AI

As AI is increasingly integrated into everyday technology, many people agree that addressing bias in AI is an important issue. But what does “AI bias” actually mean?

Computer scientists say an AI model is biased if it unexpectedly produces skewed results. These results could exhibit prejudice against individuals or groups, or otherwise not be in line with positive human values like fairness and truth. Even small divergences from expected behavior can have a “butterfly effect,” in which seemingly minor biases can be amplified by generative AI and have far-reaching consequences.

Bias in generative AI systems can come from a variety of sources. Problematic training data can associate certain occupations with specific genders or perpetuate racial biases. Learning algorithms themselves can be biased and then amplify existing biases in the data.

But systems could also be biased by design. For example, a company might design its generative AI system to prioritize formal over creative writing, or to specifically serve government industries, thus inadvertently reinforcing existing biases and excluding different views. Other societal factors, like a lack of regulations or misaligned financial incentives, can also lead to AI biases.

The challenges of eradicating bias

It’s not clear whether bias can – or even should – be entirely eliminated from AI systems.

Imagine you’re an AI engineer and you notice your model produces a stereotypical response, like Sicilians being “smelly.” You might think that the solution is to remove some bad examples in the training data, maybe jokes about the smell of Sicilian food. Recent research has identified how to perform this kind of “AI neurosurgery” to deemphasize associations between certain concepts.

But these well-intentioned changes can have unpredictable, and possibly negative, effects. Even small variations in the training data or in an AI model configuration can lead to significantly different system outcomes, and these changes are impossible to predict in advance. You don’t know what other associations your AI system has learned as a consequence of “unlearning” the bias you just addressed.

Other attempts at bias mitigation run similar risks. An AI system that is trained to completely avoid certain sensitive topics could produce incomplete or misleading responses. Misguided regulations can worsen, rather than improve, issues of AI bias and safety. Bad actors could evade safeguards to elicit malicious AI behaviors – making phishing scams more convincing or using deepfakes to manipulate elections.

With these challenges in mind, researchers are working to improve data sampling techniques and algorithmic fairness, especially in settings where certain sensitive data is not available. Some companies, like OpenAI, have opted to have human workers annotate the data.

On the one hand, these strategies can help the model better align with human values. On the other hand, by implementing any of these approaches, developers also run the risk of introducing new cultural, ideological, or political biases.

Controlling biases

There’s a trade-off between reducing bias and making sure that the AI system is still useful and accurate. Some researchers, including me, think that generative AI systems should be allowed to be biased – but in a carefully controlled way.

For example, my collaborators and I developed techniques that let users specify what level of bias an AI system should tolerate. This model can detect toxicity in written text by accounting for in-group or cultural linguistic norms. While traditional approaches can inaccurately flag some posts or comments written in African-American English as offensive and by LGBTQ+ communities as toxic, this “controllable” AI model provides a much fairer classification.
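To make the idea of a user-specified tolerance more concrete, here is a minimal Python sketch. It is not the model described above, only an illustration under stated assumptions: the `Post` type, the `toxicity_score` and `in_group` fields, the `is_flagged` function, and the `in_group_margin` parameter are all hypothetical names invented for this example, and the scores are assumed to come from some upstream classifier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Post:
    text: str
    toxicity_score: float  # hypothetical score in [0, 1] from an upstream classifier
    in_group: bool         # hypothetical label: written within a community's own linguistic norms


def is_flagged(post: Post, tolerance: float, in_group_margin: float = 0.2) -> bool:
    """Flag a post when its score exceeds the user-chosen tolerance.

    In-group posts are judged against a higher effective threshold
    (capped at 1.0), a crude stand-in for accounting for cultural norms.
    """
    if not 0.0 <= tolerance <= 1.0:
        raise ValueError("tolerance must be in [0, 1]")
    threshold = min(1.0, tolerance + in_group_margin) if post.in_group else tolerance
    return post.toxicity_score > threshold


if __name__ == "__main__":
    posts = [
        Post("in-group banter", 0.55, in_group=True),
        Post("out-of-group remark", 0.55, in_group=False),
        Post("clearly abusive message", 0.90, in_group=True),
    ]
    for post in posts:
        print(post.text, is_flagged(post, tolerance=0.5))
```

The design choice worth noticing is that the bias is explicit and adjustable: the same score can lead to different decisions depending on context, and the user, not the developer, decides how much to tolerate.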

Controllable – and safe – generative AI is important to ensure that AI models produce outputs that align with human values, while still allowing for nuance and flexibility.

Toward fairness

Even if researchers could achieve bias-free generative AI, that would be just one step toward the broader goal of fairness. The pursuit of fairness in generative AI requires a holistic approach – not only better data processing, annotation, and debiasing algorithms, but also human collaboration among developers, users, and affected communities.

As AI technology continues to proliferate, it’s important to remember that bias removal is not a one-time fix. Rather, it’s an ongoing process that demands constant monitoring, refinement, and adaptation. Although developers might be unable to easily anticipate or contain the butterfly effect, they can continue to be vigilant and thoughtful in their approach to AI bias.

This article is republished from The Conversation under a Creative Commons license. Read the original article written by Emilio Ferrara, Professor of Computer Science and of Communication, University of Southern California.