Google’s DeepMind and Google Cloud revealed a new tool to help better identify when AI-generated images are being used, according to an August 29 blog post.

SynthID, which is currently in beta, is aimed at curbing the spread of misinformation by adding an invisible, permanent watermark to images to identify them as computer-generated. It’s currently available to a limited number of Vertex AI customers who are using Imagen, one of Google’s text-to-image generators.

This invisible watermark is embedded directly into the pixels of an image created by Imagen and remains intact even when the image undergoes modifications such as filters or color alterations.

Beyond just adding watermarks to images, SynthID employs a second approach in which it can assess the likelihood of an image being created by Imagen.

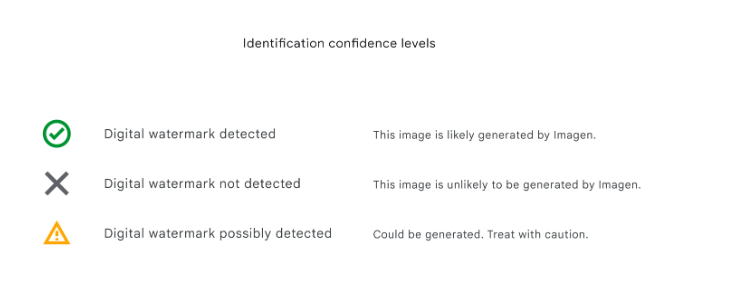

The AI tool provides three “confidence” levels for interpreting the results of digital watermark identification:

- “Detected” – the image is likely generated by Imagen

- “Not Detected” – the image is unlikely to be generated by Imagen

- “Possibly detected” – the image could be generated by Imagen. Treat with caution.
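To make the three-level reading concrete, here is a minimal Python sketch of how a detector's confidence score might be bucketed into those labels. This is an illustration under stated assumptions, not SynthID's actual logic: the `classify` function, the 0-to-1 score scale, and both cut-off values are hypothetical, since Google has not published how its detector sets these boundaries.

```python
from enum import Enum


class WatermarkResult(Enum):
    DETECTED = "Detected"
    POSSIBLY_DETECTED = "Possibly detected"
    NOT_DETECTED = "Not Detected"


# Hypothetical cut-offs, chosen only for illustration.
# Google has not published the thresholds SynthID uses.
DETECTED_MIN = 0.9
POSSIBLE_MIN = 0.5


def classify(score: float) -> WatermarkResult:
    """Map an assumed 0-to-1 detector score to one of three labels."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be between 0 and 1")
    if score >= DETECTED_MIN:
        return WatermarkResult.DETECTED
    if score >= POSSIBLE_MIN:
        return WatermarkResult.POSSIBLY_DETECTED
    return WatermarkResult.NOT_DETECTED
```

The middle band is the reason for having three levels rather than two: a score that falls between the cut-offs is reported as uncertain instead of being forced into a yes-or-no answer.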

In the blog post, Google mentioned that while the technology “isn’t perfect,” its internal tool testing has shown accuracy against common image manipulations.

Due to advancements in deepfake technology, tech companies are actively seeking ways to identify and flag manipulated content, especially when that content operates to disrupt the social norm and create panic, such as the fake image of the Pentagon being bombed.

The EU, of course, is already working to implement technology through its EU Code of Practice on Disinformation that can recognize and label this type of content for users spanning Google, Meta, Microsoft, TikTok, and other social media platforms. The Code is the first self-regulatory piece of legislation intended to encourage companies to collaborate on solutions to combating misinformation. When it was first introduced in 2018, 21 companies had already agreed to commit to this Code.

While Google has taken its own approach to addressing the issue, a consortium called the Coalition for Content Provenance and Authenticity (C2PA), backed by Adobe, has been a leader in digital watermark efforts. Google previously introduced the “About this image” tool to provide users with information about the origins of images found on its platform.

SynthID is just another next-gen method by which we’re able to identify digital content, acting as a kind of “upgrade” to how we identify a piece of content through its metadata. Since SynthID’s invisible watermark is embedded into an image’s pixels, it’s compatible with other image identification methods that are based on metadata, and it is still detectable even when that metadata is lost.

However, with the rapid advancement of AI technology, it remains uncertain whether technical solutions like SynthID will be completely effective in addressing the growing challenge of misinformation.

Editor’s note: This article was written by an nft now staff member in collaboration with OpenAI’s GPT-4.